What Is a Scraper API? How It Works, Use Cases & Examples

The Scraper

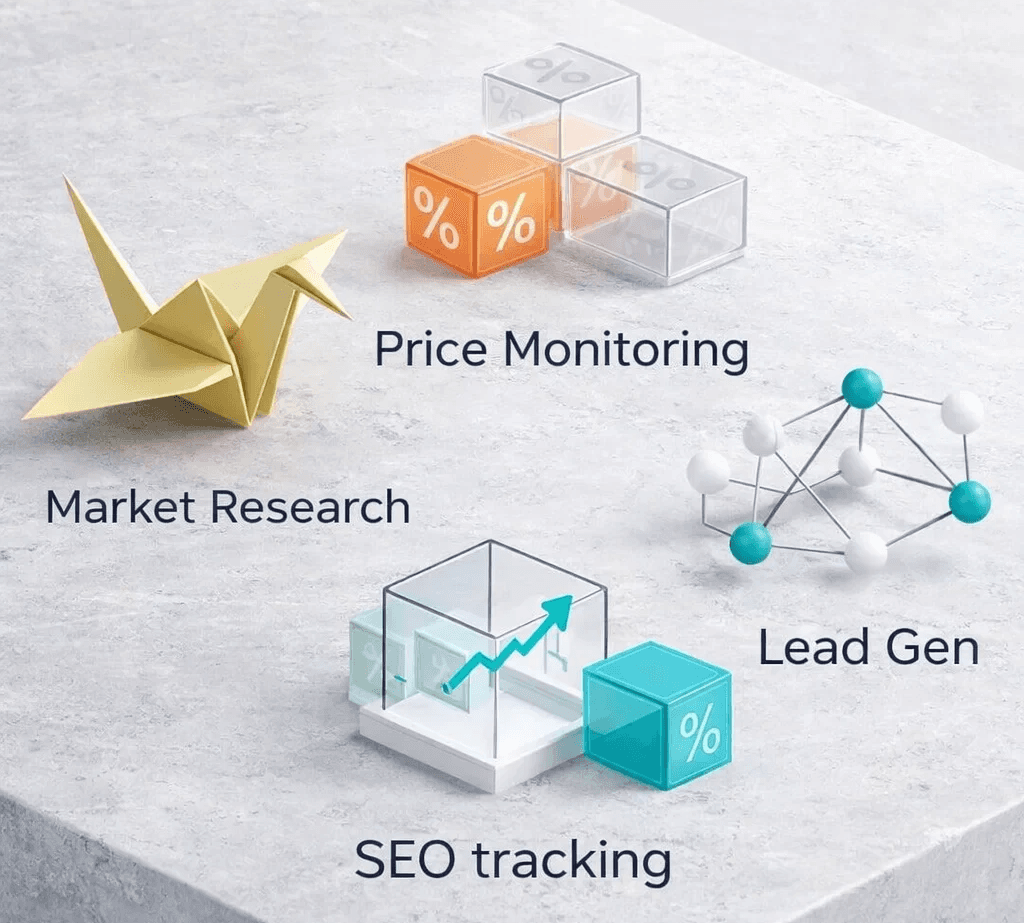

Organizations depend on web data for pricing intelligence, market research, lead generation, SEO tracking, and product analysis. Much of this information exists on public websites, yet collecting it manually requires time and constant monitoring.

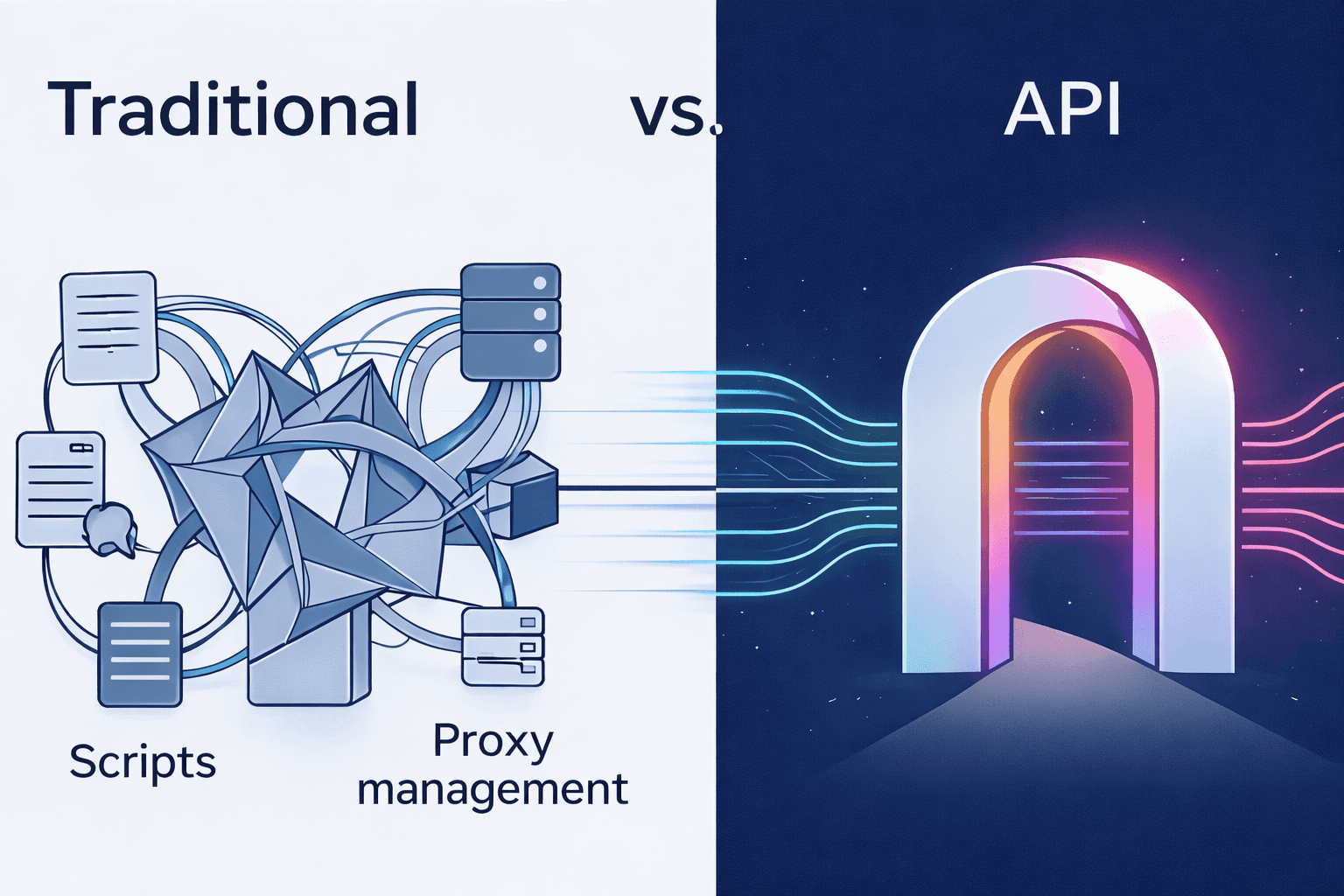

Automation helps solve this challenge through web scraping tools that extract data directly from websites. Traditional scraping setups require scripts, proxy management, CAPTCHA handling, and infrastructure maintenance, which can become complex as data needs increase.

A scraper API simplifies this process. Instead of building scraping systems internally, developers send a request to a web scraper API that retrieves webpage data and returns it through an API response. The service manages proxies, page rendering, and access restrictions behind the scenes.

Many companies use a data scraper API to collect information from e-commerce websites, search engines, directories, and other public sources at scale.

What Is a Scraper API?

A scraper API is a service that extracts data from websites through a simple API request. Developers send a webpage URL to the API and receive the page content or structured data in response.

Traditional web scraping often requires proxy management, browser rendering, and handling access restrictions. A web scraper API manages these tasks automatically, which simplifies large-scale data collection.

A scraper API typically provides features such as:

• Website data extraction to retrieve page content such as products, reviews, or listings

• Proxy rotation through a scraper API proxy network to reduce request blocking

• JavaScript rendering to load dynamic webpages before extracting data

• CAPTCHA handling to manage verification challenges

• Responses delivered as raw HTML or structured JSON for easier processing

A data scraper API helps businesses collect large volumes of public web data without building and maintaining complex scraping infrastructure.

How Does a Scraper API Work?

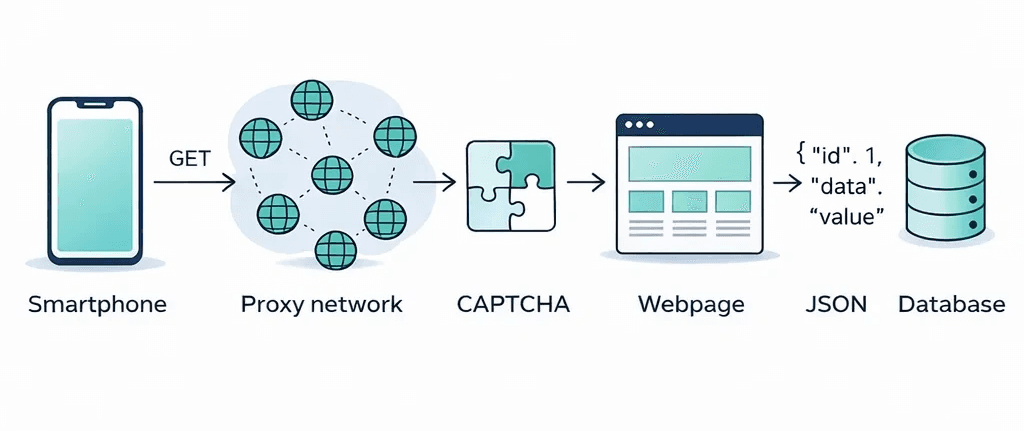

A scraper API retrieves website data through a structured process that handles requests, proxy routing, page rendering, and data delivery. Instead of interacting directly with the target website, the request passes through the API service, which manages the scraping process and returns the extracted content.

The process generally follows these steps:

Step 1: Send an API request

The user sends a request to the scraper API with the target website URL and an API key. This request tells the API which webpage needs to be collected.

Step 2: Route the request through proxies

The API routes the request through a rotating proxy network. A scraper API proxy system assigns different IP addresses to reduce the chance of blocks or rate limits.
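The rotation idea can be sketched in a few lines. This is a minimal illustration that assumes plain round-robin assignment; a production service likely weighs proxy health, location, and past blocks as well. The proxy addresses are placeholders from a documentation-only IP range, and `assign_proxies` is a hypothetical helper name.

```python
from itertools import cycle

# Placeholder addresses from the documentation-only range 203.0.113.0/24.
PROXY_POOL = [
    "http://203.0.113.10:8080",
    "http://203.0.113.11:8080",
    "http://203.0.113.12:8080",
]


def assign_proxies(urls, pool):
    """Pair each target URL with the next proxy in the pool, wrapping around."""
    rotation = cycle(pool)
    return [(url, next(rotation)) for url in urls]


targets = [f"https://example.com/page/{n}" for n in range(1, 5)]
assignments = assign_proxies(targets, PROXY_POOL)
for url, proxy in assignments:
    print(proxy, "->", url)
```

With three proxies and four URLs, the fourth request wraps around to the first proxy, so no single address carries consecutive requests.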

Step 3: Access the target website

The API fetches the webpage from the target server. The request appears similar to a normal browser visit rather than an automated script.

Step 4: Render dynamic page content

Many websites load data through JavaScript. The web scraper API uses browser rendering technology to load the page completely before extracting the content.

Step 5: Handle protection mechanisms

The service detects anti-bot systems, rate limits, or CAPTCHA challenges and applies methods that allow the request to continue.

Step 6: Return the extracted data

Once the page loads successfully, the scraper API returns the data to the user. The response can contain HTML content, structured data, or parsed information.

This process allows developers to use a scrape API without building their own scraping infrastructure. The API manages access, reliability, and scaling while the developer focuses on analyzing the collected data.

Key Components of a Web Scraper API

A web scraper API relies on several technical components that allow it to collect website data reliably. These components work together to retrieve pages, handle restrictions, and return clean data to the user.

Below are the main elements that power most scraper API services.

1. Proxy network

A scraper API proxy network routes requests through multiple IP addresses. This reduces the chance of IP blocking and helps distribute requests across different locations. Proxy rotation also supports location-specific data collection when websites display different content for different regions.

2. Headless browser rendering

Many modern websites load content through JavaScript. A web scraper API uses headless browsers to load the page the same way a normal browser would. Once the page finishes loading, the API extracts the visible content.

3. CAPTCHA handling systems

Some websites present verification challenges to block automated access. A data scraper API detects CAPTCHA prompts and applies solving methods that allow the request to continue.

4. Request management and retries

Scraping large volumes of pages requires careful request control. Scraper APIs manage retry logic, request timing, and concurrency to keep data collection stable and reliable.
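As an illustration of retry logic and request timing, the sketch below retries a failed fetch with exponential backoff. It is one common approach, not a description of how any particular provider implements retries. `FetchError`, `fetch_with_retries`, and the stand-in `flaky_fetch` are hypothetical names, and the fetch function is injected so the example runs without network access.

```python
import time


class FetchError(Exception):
    """Raised by a fetch function when a request fails."""


def fetch_with_retries(fetch, url, max_attempts=4, base_delay=0.5, sleep=time.sleep):
    """Call fetch(url) until it succeeds or max_attempts is reached.

    The wait doubles after every failure: base_delay, then 2x, then 4x.
    """
    for attempt in range(1, max_attempts + 1):
        try:
            return fetch(url)
        except FetchError:
            if attempt == max_attempts:
                raise  # out of attempts, surface the last error
            sleep(base_delay * 2 ** (attempt - 1))


# Demo with a stand-in fetcher that fails twice before succeeding.
calls = {"count": 0}


def flaky_fetch(url):
    calls["count"] += 1
    if calls["count"] < 3:
        raise FetchError("temporary failure")
    return f"<html>content of {url}</html>"


waits = []  # record the delays instead of actually sleeping
page = fetch_with_retries(flaky_fetch, "https://example.com", sleep=waits.append)
print(page)
print("delays used:", waits)
```

Passing `sleep` as a parameter keeps the demo instant; in real use the default `time.sleep` applies the delays.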

5. Output formatting

After collecting the webpage, the API returns the response in usable formats. Common output options include raw HTML, structured JSON data, parsed content, or page screenshots.

Together, these components allow a scraper API to collect website data efficiently without requiring developers to manage complex scraping infrastructure.

Types of Scraper APIs

Scraper APIs serve different data extraction needs depending on how websites deliver their content and how the collected data will be used. Some APIs return raw webpage content, while others provide structured or AI-processed data.

Understanding the types of scraper APIs helps developers choose the right tool for a specific data collection task.

1. HTML Scraper APIs

An HTML scraper API retrieves the raw source code of a webpage. The response usually contains the full HTML document, which developers can then parse to extract specific elements such as titles, product prices, links, or reviews.

This type of web scraper API works well when developers want full control over the extraction process.

Common use cases include:

• Extracting product listings from e-commerce pages

• Collecting article content from blogs or news sites

• Gathering metadata such as headings, links, and images

• Building custom parsers for structured datasets

HTML scraper APIs provide flexibility because developers can decide how the data should be processed after retrieval.
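To show what that post-retrieval parsing can look like, here is a small sketch that pulls the page title and links out of returned HTML using only Python's standard library. The `sample_html` string stands in for an API response body, and `TitleAndLinks` is a hypothetical class name. Larger projects often use a dedicated parsing library instead.

```python
from html.parser import HTMLParser


class TitleAndLinks(HTMLParser):
    """Collect the <title> text and every href found in <a> tags."""

    def __init__(self):
        super().__init__()
        self.title = ""
        self.links = []
        self._in_title = False

    def handle_starttag(self, tag, attrs):
        if tag == "title":
            self._in_title = True
        elif tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.links.append(href)

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False

    def handle_data(self, data):
        # Only text that appears between <title> and </title> is kept.
        if self._in_title:
            self.title += data


# Stand-in for the HTML body an API response would carry.
sample_html = """
<html><head><title>Example Store</title></head>
<body><a href="/item/1">Item 1</a> <a href="/item/2">Item 2</a></body></html>
"""

parser = TitleAndLinks()
parser.feed(sample_html)
print(parser.title)
print(parser.links)
```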

2. Structured Data Scraper APIs

A structured data scraper API returns information in an organized format instead of raw HTML. The API identifies key elements on a page and delivers them as structured fields such as JSON objects.

For example, an e-commerce scraping response may contain:

• product name

• price

• rating

• product description

• availability status

Structured responses reduce the need for manual parsing. Businesses often use a data scraper API when they need large datasets that can be analyzed or stored directly in databases.
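A structured response of this kind might be loaded into a typed record as sketched below. The JSON field names (`name`, `price`, `rating`, `description`, `availability`) are assumptions made for illustration; actual field names depend on the provider and should be taken from its documentation.

```python
import json
from dataclasses import dataclass
from typing import Optional


@dataclass
class Product:
    name: str
    price: float
    rating: Optional[float]
    description: str
    in_stock: bool


def parse_product(payload: str) -> Product:
    """Convert one JSON product record into a Product instance."""
    data = json.loads(payload)
    rating = data.get("rating")
    return Product(
        name=data["name"],
        price=float(data["price"]),  # accepts "24.90" as well as 24.9
        rating=float(rating) if rating is not None else None,
        description=data.get("description", ""),
        in_stock=data.get("availability") == "in_stock",
    )


# Hypothetical payload; the field names are illustrative only.
sample_payload = (
    '{"name": "Desk Lamp", "price": "24.90", "rating": 4.6, '
    '"description": "LED desk lamp", "availability": "in_stock"}'
)

product = parse_product(sample_payload)
print(product)
```

Normalizing types at this boundary (for example, converting the price string to a float) means the rest of the pipeline can write records to a database without further cleanup.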

3. AI Scraper APIs

An AI scraper API combines web scraping with artificial intelligence to extract and structure data automatically. Instead of writing parsing rules for each website, AI models analyze page content and identify the relevant information.

AI scraping systems can:

• detect product details automatically

• summarize webpage content

• convert unstructured text into structured data

• classify information across multiple sources

This approach works well for large datasets where website layouts vary widely.

4. Search Engine Scraper APIs

Search engine scraping APIs collect data from search engine results pages. These APIs extract information such as search rankings, advertisements, featured snippets, and related queries.

SEO teams often use this type of scraper API to monitor keyword rankings and analyze search results across different locations.

These APIs may return:

• organic search results

• featured snippet content

• local pack results

• related search suggestions

5. E-commerce Scraper APIs

An e-commerce scraper API focuses on extracting product data from online marketplaces and retail websites.

Common data points include:

• product titles

• pricing information

• reviews and ratings

• stock availability

• product descriptions

Businesses use this type of scraper API for price monitoring, product intelligence, and catalog analysis.

6. Social Media Scraper APIs

A social media scraper API collects publicly available information from social platforms.

Examples of extracted data include:

• user posts and comments

• engagement metrics

• profile information

• trending topics

Marketing teams use these APIs to track brand mentions, audience sentiment, and engagement patterns.

7. Real Estate Scraper APIs

A real estate scraper API extracts property listings from housing and rental platforms.

Typical data fields include:

• property price

• location details

• property type

• listing descriptions

• agent contact information

Property analytics platforms use these APIs to build real estate datasets and track housing market trends.

8. News and Content Scraper APIs

A news scraper API retrieves articles and editorial content from news websites, blogs, and media platforms.

These APIs collect information such as:

• article titles

• author names

• publication dates

• article body text

• tags and categories

Media monitoring platforms rely on these APIs to track industry news and analyze coverage trends.

Each type of scraper API addresses different data extraction needs. Some focus on retrieving raw webpage content, while others deliver structured datasets designed for analytics, research, or automation.

Benefits of Using a Scraper API

Organizations collect web data for research, analytics, monitoring, and automation. A scraper API helps simplify this process by removing the technical barriers associated with traditional scraping systems.

Below are the main benefits of using a web scraper API.

Faster development and deployment

Developers can begin extracting website data without building scraping infrastructure. A scrape API requires only a request with the target URL and API key, which reduces development time.

Built-in proxy management

A scraper API proxy network rotates IP addresses automatically. This helps prevent request blocking and allows data collection from multiple geographic locations.

Handles dynamic websites

Many websites load content through JavaScript. A web scraper API uses browser rendering technology to load the page fully before extracting the data.

Automatic handling of access restrictions

Websites often present CAPTCHA challenges or rate limits to stop automated traffic. A data scraper API detects these restrictions and applies methods that allow the request to continue.

Scalable data extraction

A scraper API allows organizations to collect data across thousands or millions of webpages. The API provider manages request distribution and infrastructure scaling.

Reliable data collection

Scraper APIs manage request retries, connection errors, and response validation. This improves the reliability of large scraping tasks.

Structured data delivery

Many APIs return extracted content in structured formats such as JSON. This allows businesses to store and analyze data without extensive parsing work.

These advantages make scraper APIs a practical solution for organizations that require consistent and scalable web data extraction.

Common Use Cases of Scraper APIs

Organizations use scraper APIs to collect structured web data for analytics, monitoring, automation, and research. A web scraper API allows businesses to extract information across many websites without building scraping infrastructure internally.

Below are some common applications of scraper APIs across industries.

1. Price Monitoring

Retailers and e-commerce platforms track competitor pricing to adjust their strategies. A scraper API collects product data across multiple marketplaces and retail sites.

Typical data collected includes:

• product prices

• discounts and offers

• inventory availability

• product ratings and reviews

This information helps companies monitor pricing trends and react quickly to market changes.
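Once prices have been collected on two different days, detecting changes is a simple comparison. The sketch below assumes each scrape has already been reduced to a dictionary of product identifier to price; the identifiers, prices, and the `price_changes` helper are made up for illustration.

```python
def price_changes(previous, current, threshold_pct=1.0):
    """Return (sku, old, new, pct_change) for prices that moved by at least threshold_pct.

    Products missing from the previous snapshot are skipped because there is
    nothing to compare against.
    """
    changes = []
    for sku, new_price in current.items():
        old_price = previous.get(sku)
        if old_price is None or old_price == 0:
            continue
        pct = (new_price - old_price) / old_price * 100
        if abs(pct) >= threshold_pct:
            changes.append((sku, old_price, new_price, round(pct, 1)))
    return changes


# Made-up snapshots standing in for two days of scraped prices.
yesterday = {"lamp-01": 24.90, "chair-07": 120.00, "desk-03": 310.00}
today = {"lamp-01": 22.41, "chair-07": 120.00, "desk-03": 325.50, "rug-11": 80.00}

changes = price_changes(yesterday, today)
for sku, old, new, pct in changes:
    print(f"{sku}: {old} -> {new} ({pct:+}%)")
```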

2. Market Research and Data Analysis

Businesses collect large datasets from websites to understand product demand, customer sentiment, and industry trends.

A data scraper API for market research helps gather information such as:

• product listings across marketplaces

• review sentiment and ratings

• feature comparisons between products

• category trends and demand signals

Research teams use these datasets to support decision making and product development.

3. SEO and Search Engine Monitoring

SEO tools rely on scraper APIs to collect search engine results data at scale. This allows them to monitor rankings and analyze search performance.

Typical extracted data includes:

• organic search results

• featured snippets

• advertisements in search results

• related search queries

A web scraper API enables large-scale keyword tracking across different locations and devices.

4. Lead Generation

Sales teams often collect business data from directories and listing platforms. A scraper API helps automate this process.

Examples of collected information include:

• company names

• business contact details

• industry categories

• company websites

This data helps build prospect lists and sales intelligence databases.

5. Social Media and Content Platforms

Many organizations monitor content across social and media platforms to track trends, engagement, and public discussions.

Scraper APIs can collect data from platforms such as:

• 9GAG

• Call2Friends

• Dailymotion

• DeviantArt

These datasets help analyze audience engagement, viral content trends, and community discussions.

6. Advertising and AdTech Intelligence

Advertising platforms and marketing analytics tools collect information about digital ads across websites and platforms.

An AdTech scraper API can help gather:

• advertising placements

• ad creatives and formats

• campaign visibility across platforms

This is commonly used in AdTech analytics and digital advertising monitoring.

7. App and Device Ecosystem Monitoring

Companies often analyze data across device ecosystems and streaming platforms to understand content availability and user experiences.

Scraper APIs can extract publicly available data from services such as:

• Android platforms

• Apple TV ecosystems

• ArenaVision content listings

This helps track content distribution, application listings, and service availability across platforms.

8. AI and Machine Learning Datasets

Machine learning systems require large volumes of structured data for training and analysis.

A scraper API helps collect datasets such as:

• articles and blog content

• product descriptions

• user reviews

• public listings and datasets

AI teams use this data to build training datasets for natural language processing and analytics models.

Web Scraper vs API Scraping: What’s the Difference?

Web data collection can happen through two main approaches: traditional web scraping and API-based data extraction. Both methods retrieve information from websites, yet they operate differently and require different levels of technical setup.

A traditional web scraper collects data by downloading webpage content and extracting elements from the HTML. An API scraper or scraper API retrieves data through an API request where the service handles the scraping process internally.

The comparison below explains the main differences.

| Feature | Traditional Web Scraper | Scraper API |
| --- | --- | --- |
| Infrastructure | Requires developers to build and maintain scraping infrastructure | Infrastructure managed by the API provider |
| Proxy management | Developers must configure rotating proxies | Proxy rotation handled automatically through scraper API proxy networks |
| JavaScript rendering | Requires headless browsers and additional setup | Built-in browser rendering handled by the web scraper API |
| CAPTCHA handling | Developers must integrate CAPTCHA solving tools | CAPTCHA detection and handling managed by the API service |
| Development effort | Requires scripting, proxy setup, and maintenance | Only requires sending a request to the scrape API |
| Scalability | Scaling requires more servers and proxy resources | Designed to scale requests across distributed systems |
| Reliability | Blocks and request failures require manual handling | Automatic retries and request management |
| Data output | Developers must parse raw HTML manually | Many data scraper APIs return structured data |

Traditional scraping still works well for small projects or custom extraction workflows. A scraper API works better for large-scale data extraction where reliability, proxy management, and infrastructure stability matter.

Businesses often choose a web scraper API because it simplifies data collection while reducing the time required to maintain scraping systems.

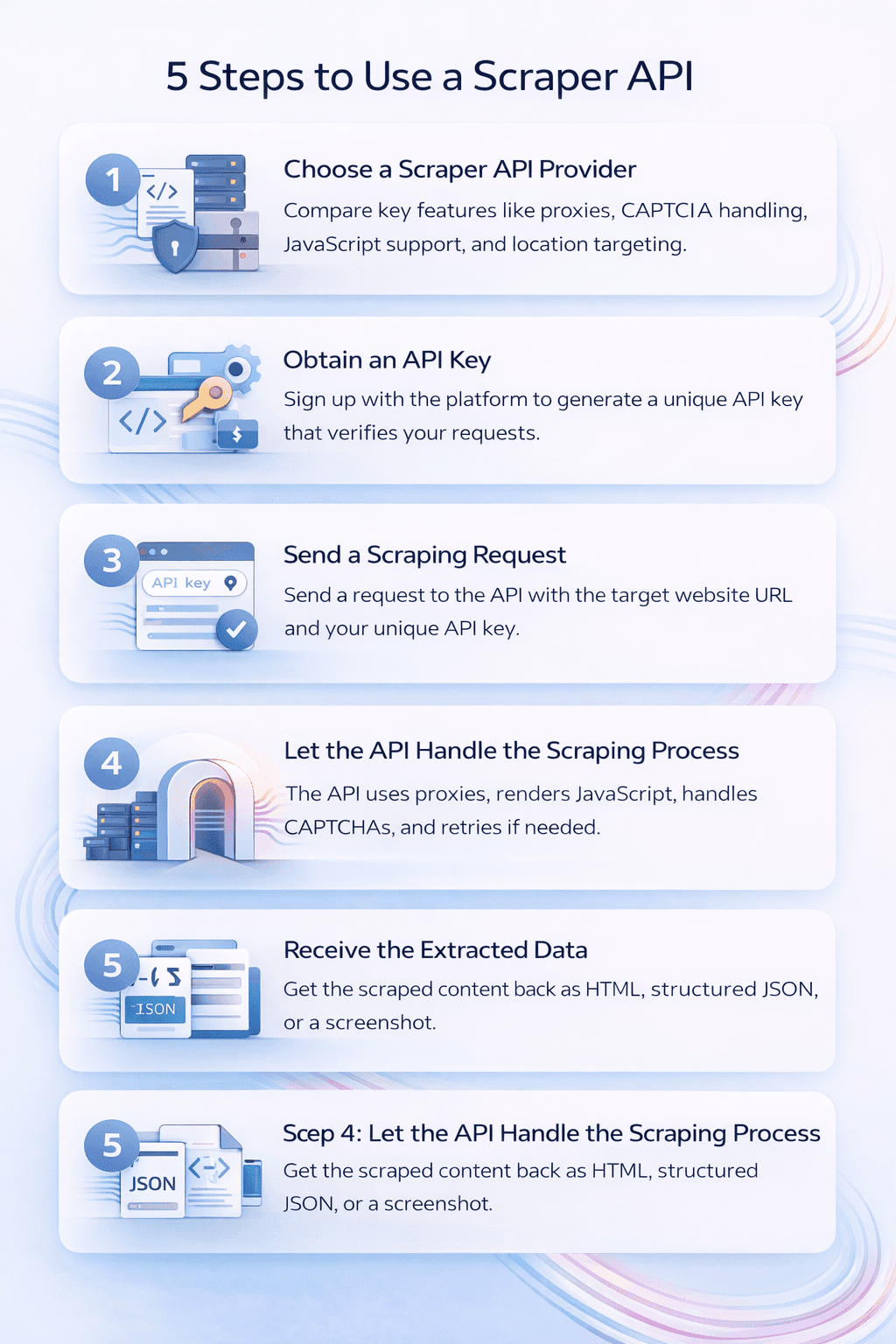

How to Use a Scraper API (Step-by-Step Guide)

A scraper API allows developers to collect webpage data with a simple request instead of building a full scraping system. Most services follow a similar workflow where the user sends a request and the API returns the extracted content.

The steps below explain how to use a scraper API for web data extraction.

Step 1: Choose a Scraper API Provider

The first step involves selecting a reliable web scraper API service. Different providers offer varying capabilities depending on the type of data you want to collect.

Key features to evaluate include:

• proxy network coverage

• JavaScript rendering support

• CAPTCHA handling

• global location targeting

• response formats such as HTML or JSON

Choosing the right data scraper API ensures stable and consistent data collection.

Step 2: Obtain an API Key

Most scraper API platforms require authentication through an API key. After creating an account, the platform generates a unique key that authorizes your requests.

The API key helps the provider:

• verify request ownership

• manage request limits

• track usage and billing

Developers include this key in every request sent to the scrape API.

Step 3: Send a Scraping Request

Once the API key is available, developers can send a request that contains the target webpage URL.

A basic request typically includes:

• the target website URL

• the API key

• optional parameters such as location or rendering settings

The scraper API receives the request and begins collecting the webpage content.
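A request of this shape could be assembled as in the sketch below. The endpoint `https://api.scraper.example/v1/scrape` and the parameter names `url`, `api_key`, `country`, and `render` are placeholders, not any real provider's interface. Some services expect the key in a header rather than the query string, so check the provider's documentation before adapting this.

```python
from urllib.parse import urlencode

# Hypothetical endpoint; substitute the one from your provider's documentation.
API_ENDPOINT = "https://api.scraper.example/v1/scrape"


def build_scrape_url(target_url, api_key, **options):
    """Return the full request URL for one scraping call.

    `options` carries optional settings; the names used in the demo below
    (`country`, `render`) are illustrative only.
    """
    params = {"url": target_url, "api_key": api_key}
    # Drop unset options so the query string only carries what was asked for.
    params.update({k: v for k, v in options.items() if v is not None})
    # urlencode percent-encodes the target URL so its own "?" and "&"
    # characters do not collide with the API's query string.
    return f"{API_ENDPOINT}?{urlencode(params)}"


request_url = build_scrape_url(
    "https://example.com/products?page=2",
    api_key="YOUR_API_KEY",
    country="de",
    render="true",
)
print(request_url)
```

The resulting URL can be passed to any HTTP client, such as `urllib.request.urlopen` from the standard library.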

Step 4: Let the API Handle the Scraping Process

After receiving the request, the scraper API performs the scraping tasks automatically. The system manages several processes behind the scenes.

These processes may include:

• routing requests through rotating proxy networks

• rendering pages that rely on JavaScript

• handling CAPTCHA challenges

• retrying failed requests

This automation allows developers to focus on the data rather than scraping infrastructure.

Step 5: Receive the Extracted Data

Once the page loads successfully, the scraper API returns the data to the user through an API response.

The response may contain:

• raw HTML content

• structured JSON data

• extracted page elements

• screenshots of the webpage

Developers can then store the data in databases, process it for analytics, or integrate it into applications.
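Because the response format varies, client code usually inspects the `Content-Type` header before deciding how to handle the body. The sketch below shows one way to do that. The mapping of media types to result kinds is an assumption for illustration, and `decode_response` is a hypothetical helper, not part of any provider's SDK.

```python
import json


def decode_response(content_type, body):
    """Return (kind, data) for a response body based on its Content-Type.

    `body` is the raw response as bytes. Unknown media types are passed
    through untouched so the caller can decide what to do with them.
    """
    # Strip parameters such as "; charset=utf-8" before comparing.
    media_type = content_type.split(";")[0].strip().lower()
    if media_type == "application/json":
        return "json", json.loads(body.decode("utf-8"))
    if media_type == "text/html":
        return "html", body.decode("utf-8", errors="replace")
    if media_type.startswith("image/"):
        return "screenshot", body  # keep image bytes as-is for saving to disk
    return "unknown", body


# Stand-in responses; a real client would read these from the HTTP response.
samples = [
    ("application/json; charset=utf-8", b'{"title": "Example"}'),
    ("text/html", b"<h1>Hi</h1>"),
    ("image/png", b"\x89PNG"),
]
for content_type, body in samples:
    kind, data = decode_response(content_type, body)
    print(kind, type(data).__name__)
```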

Using a scrape API simplifies large-scale data collection and reduces the complexity of traditional web scraping workflows.

Challenges in Web Scraping Without Scraper APIs

Building a scraping system without a scraper API requires developers to manage multiple technical components. Each of these components demands time, infrastructure, and constant maintenance.

Below are some of the most common challenges faced when collecting web data through traditional scraping methods.

IP blocking and rate limits

Websites often restrict repeated requests from the same IP address. Without a scraper API proxy network, scraping tools may face frequent blocks after sending multiple requests.

Proxy infrastructure management

Developers must configure and maintain large proxy pools to distribute requests across different IP addresses. Managing proxy reliability and rotation can become complex as scraping scale increases.

CAPTCHA verification challenges

Many websites deploy CAPTCHA systems to prevent automated access. Traditional scraping scripts must integrate CAPTCHA solving services, which increases cost and complexity.

Dynamic website rendering

Modern websites load content through JavaScript. Without headless browser support, scraping scripts may retrieve incomplete page content because the data loads after the initial request.

Request failure handling

Network errors, page load failures, and blocked requests can interrupt scraping jobs. Developers must build retry mechanisms and monitoring systems to maintain stable data collection.

Infrastructure scaling

Large scraping operations require multiple servers, proxy resources, and browser instances. Scaling this infrastructure internally can increase operational overhead.

A web scraper API reduces these challenges by handling proxy rotation, request retries, browser rendering, and anti-bot detection automatically. This allows teams to focus on data extraction rather than infrastructure maintenance.

Choosing the Right Scraper API

Not every scraper API offers the same level of performance, reliability, or flexibility. The right choice depends on the type of websites you need to extract data from, the scale of your requests, and the format in which you need the output.

Before selecting a provider, review the points below.

Proxy coverage

A strong scraper API proxy network helps reduce blocks and supports location-based data collection. Check if the provider offers broad geographic coverage and stable IP rotation.

JavaScript rendering support

Many websites load content after the initial page request. Choose a web scraper API that can render JavaScript pages fully before returning the content.

CAPTCHA and anti-bot handling

Some targets use access controls that stop automated requests. A reliable scraper API should handle these restrictions without requiring extra tools on your side.

Response formats

Different projects require different outputs. Some teams need raw HTML, while others need structured JSON or parsed fields. Pick a data scraper API that supports the format your workflow needs.

Scalability and request limits

Large projects require stable performance across thousands of requests. Review usage limits, concurrency support, and retry handling before making a decision.
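Concurrency limits matter on the client side as well: sending more parallel requests than a plan allows typically results in rejected calls. The sketch below caps the number of in-flight requests with a thread pool. `scrape_many` and `fake_fetch` are hypothetical names, and the stand-in fetcher avoids network access so the example runs anywhere.

```python
from concurrent.futures import ThreadPoolExecutor


def scrape_many(fetch, urls, max_concurrency=5):
    """Run fetch(url) for every URL with at most max_concurrency in flight.

    Results are returned in the same order as the input URLs.
    """
    with ThreadPoolExecutor(max_workers=max_concurrency) as pool:
        return list(pool.map(fetch, urls))


def fake_fetch(url):
    """Stand-in for a real API call; returns a label instead of page data."""
    return f"fetched {url}"


urls = [f"https://example.com/page/{n}" for n in range(1, 8)]
results = scrape_many(fake_fetch, urls, max_concurrency=3)
print(results)
```

Setting `max_concurrency` to the limit stated in the provider's plan keeps the client within its quota while still using the available parallelism.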

Documentation and ease of use

Good documentation reduces setup time and makes integration smoother. Clear request examples and setup guides can save development time.

Choosing the right scraper API helps improve reliability, reduce manual work, and support long-term data collection goals.

Using the Evomi Scraper API for Web Data Extraction

Platforms such as the Evomi Scraper API provide a managed solution for collecting data from websites without building a full scraping system. Instead of managing proxies, browser automation, and anti-bot handling manually, developers send a simple API request with the target URL and receive the extracted page data in response.

The platform is designed to remove many of the technical barriers involved in large-scale scraping. Its infrastructure automatically manages IP rotation, browser rendering, and request retries so teams can focus on analyzing data rather than maintaining scraping tools.

Some capabilities offered by this scraper API include:

Automatic anti-bot handling: The system can bypass protection systems such as Cloudflare, DataDome, and PerimeterX while also solving CAPTCHA challenges automatically.

JavaScript rendering through headless browsers: Many websites load content dynamically. The API uses browser-based rendering to fully load pages before returning the data.

Proxy rotation and geo-targeting: Requests are routed through rotating proxy networks across many global locations, which reduces blocking and allows location-specific data collection.

AI-assisted data extraction: The platform can transform raw HTML into structured or summarized data through built-in AI processing features.

Flexible output formats: Scraped results can be delivered as HTML, Markdown, JSON responses, or even screenshots of the webpage.

Developers integrate the API by sending a request that includes the target webpage URL and an API key. The service then retrieves the page and returns the extracted content through the response, which can be stored or processed by applications.

Using a platform such as this allows businesses and developers to build data pipelines, monitor websites, collect datasets for research, and automate large-scale data collection workflows with minimal infrastructure management.

Start Scraping Web Data Easily

Looking for a reliable way to collect web data at scale? Explore the Evomi Scraper API to automate data extraction, handle proxies and anti-bot systems, and retrieve website data through a simple API request.

Conclusion

A scraper API simplifies web data extraction by removing the need to manage proxies, browsers, and scraping infrastructure. Developers can send a request with a target URL and receive the webpage data through a clean API response.

Organizations rely on scraper APIs to collect large datasets for market research, price monitoring, SEO analysis, lead generation, and analytics. These tools help scale data collection while maintaining reliability and stability.

As data demand continues to grow, a web scraper API provides an efficient way to extract public information from websites and convert it into usable datasets for business and technology applications.

Author

The Scraper

Engineer and Web Scraping Specialist

About Author

The Scraper is a software engineer and web scraping specialist, focused on building production-grade data extraction systems. His work centers on large-scale crawling, anti-bot evasion, proxy infrastructure, and browser automation. He writes about real-world scraping failures, silent data corruption, and systems that operate at scale.