The Quiet Winners of the AI Boom

The Scraper

Proxy Fundamentals

When the tech world discusses the AI revolution, the spotlight follows the loudest voices: the model creators, the GPU manufacturers, and the cloud titans.

Names like OpenAI, NVIDIA, and Google dominate the headlines. Their neural networks and H100 clusters are framed as the sole engines of progress. But beneath the hype of "compute" lies a quieter, more foundational layer of the ecosystem that receives a fraction of the attention despite being equally critical.

That layer is Data Extraction. And most of it starts in a place people rarely consider: Web Scraping.

AI Doesn’t Run on Compute Alone

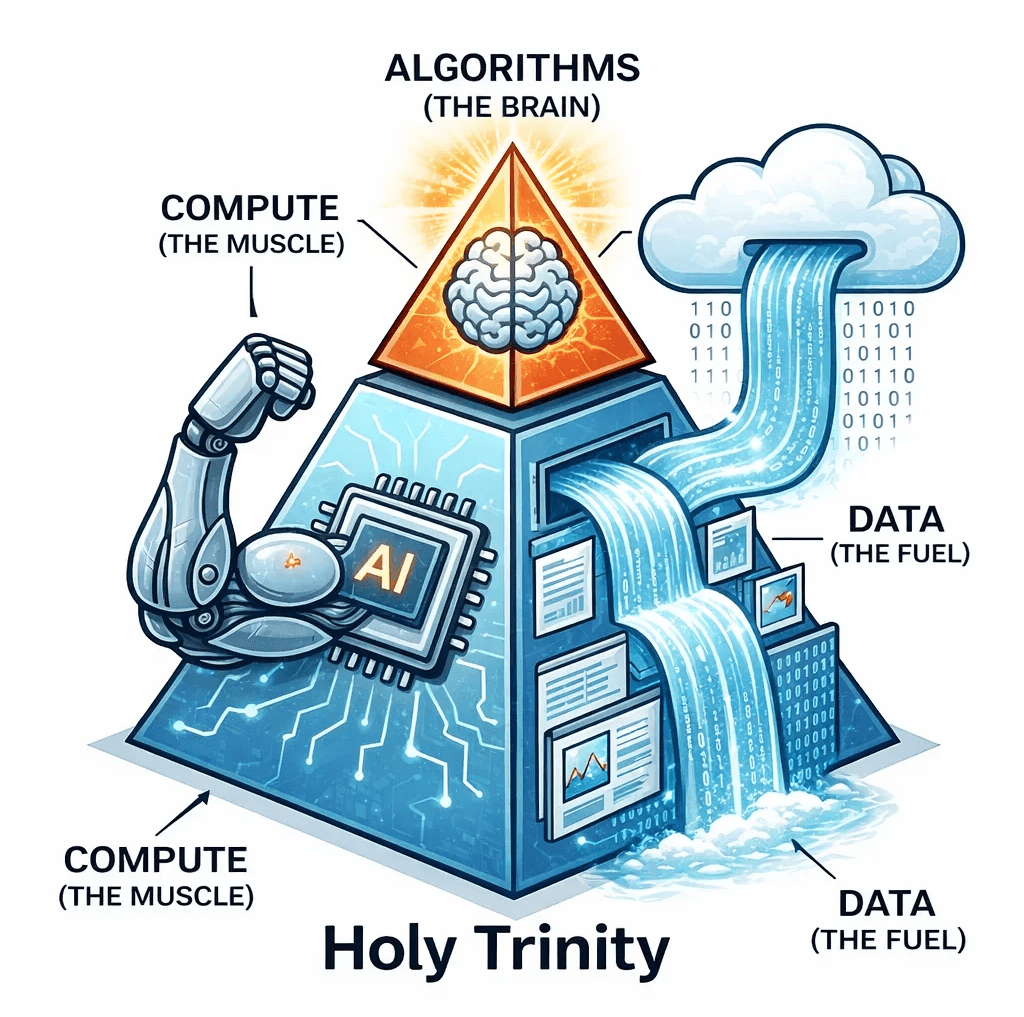

Training a Large Language Model (LLM) requires a holy trinity:

Algorithms (The brain)

Compute (The muscle)

Data (The fuel)

Compute gets the glory. Data rarely does. Yet, without massive, diverse datasets, even the most powerful cluster of GPUs is just an expensive space heater.

LLMs need trillions of tokens to understand human nuance. Vision models require billions of labeled images. Where does this data live? It lives in the "wild", on forums, documentation, marketplaces, and social platforms. Gathering this at scale isn't a manual task; it requires sophisticated, automated systems capable of breathing in the entire public internet.

The Invisible Infrastructure

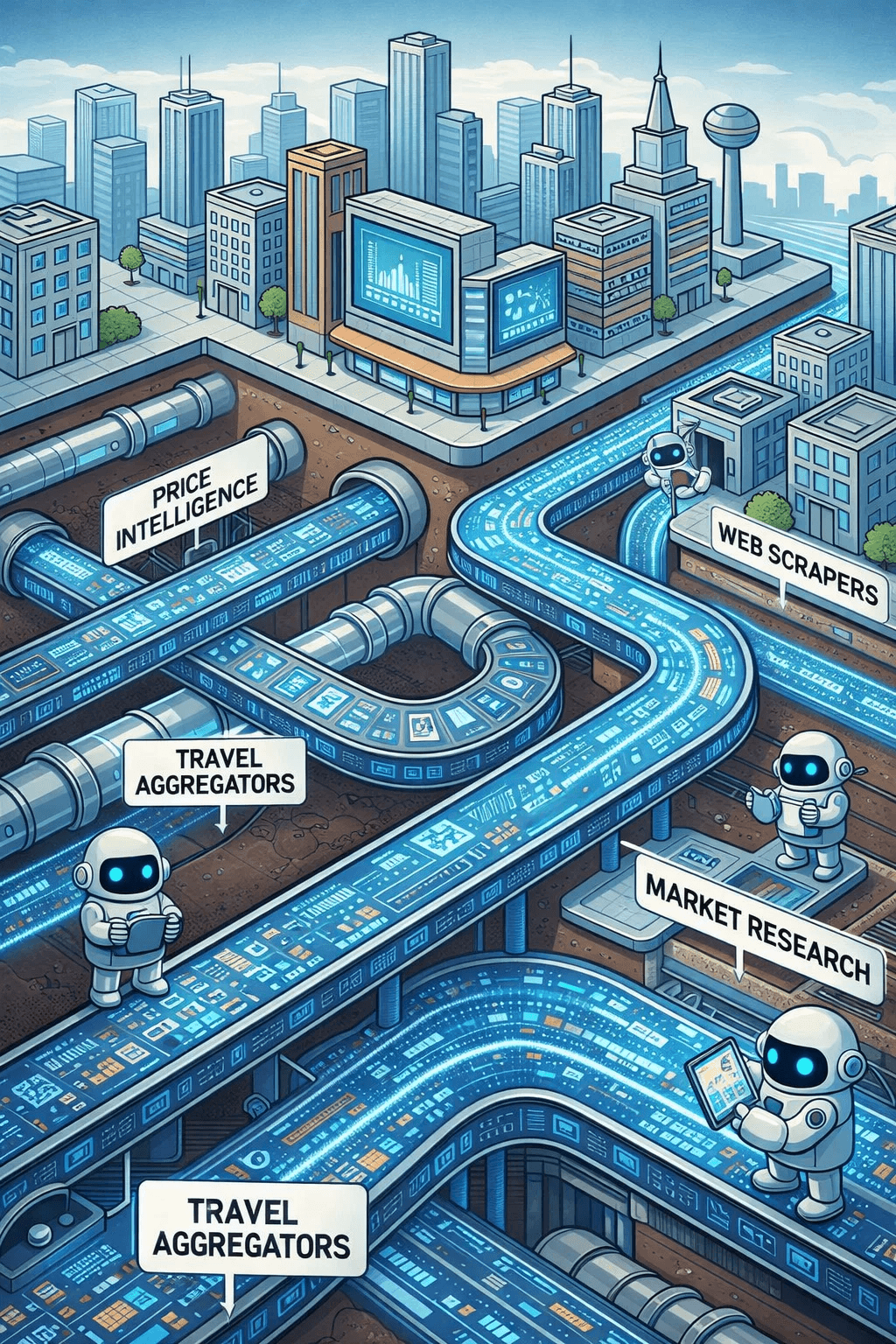

Long before "AI" was a buzzword, large-scale web scraping was already the silent backbone of the digital economy:

Price Intelligence: Monitoring millions of e-commerce SKUs.

Travel Aggregators: Real-time tracking of flight and hotel availability.

Market Research: Analyzing consumer sentiment across a thousand blogs.

Today, these same technologies have been repurposed as the "feeding tubes" for AI. The challenges that scraping engineers have solved for a decade, bypassing sophisticated anti-bot systems, rendering dynamic JavaScript, and handling shifting site architectures, are now the exact hurdles facing AI labs.

Data Pipelines: The New Oil Rigs

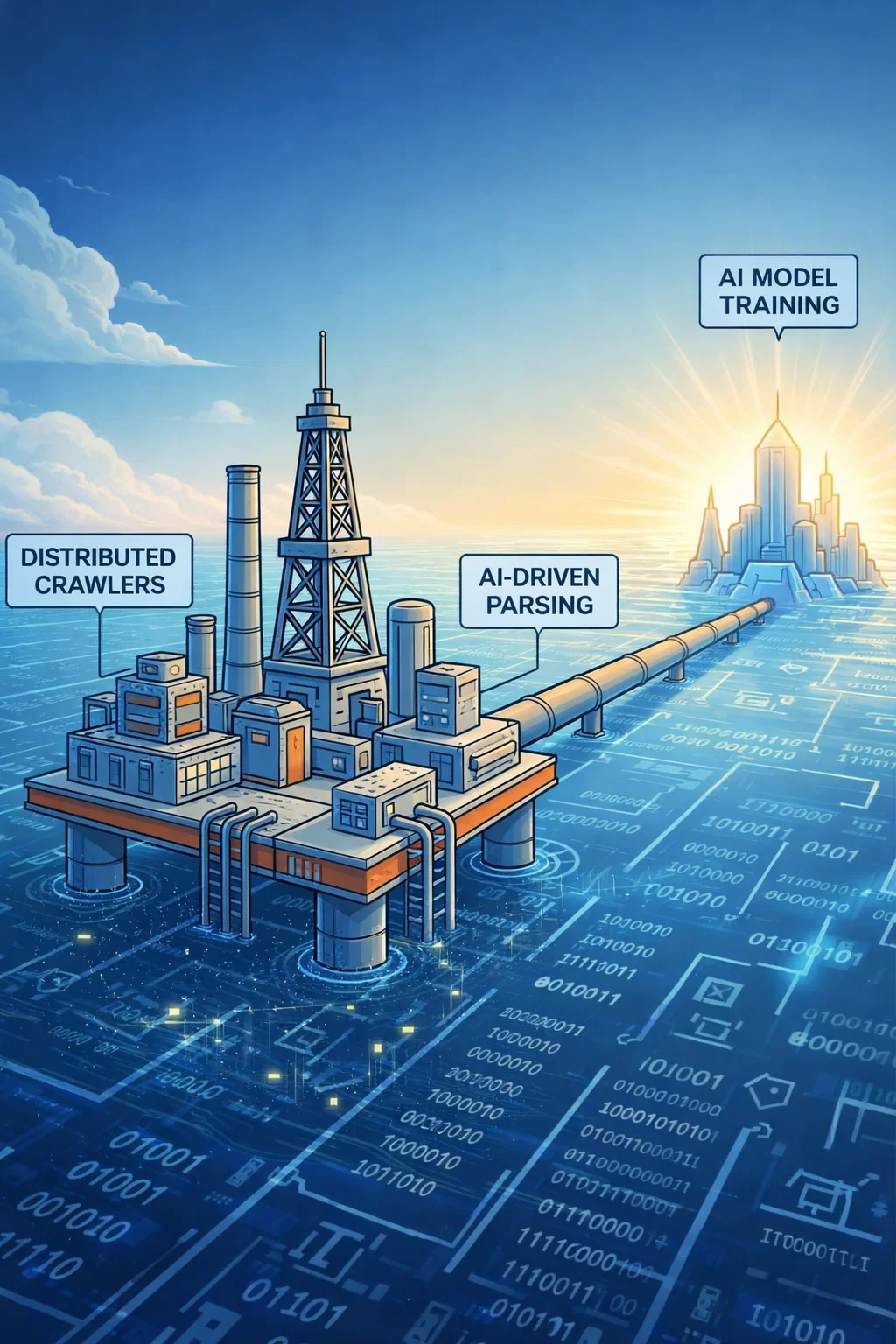

If data is the "new oil," then scraping systems are the offshore rigs.

Data doesn’t just appear in a clean, usable format. It must be discovered, extracted, and refined. This has turned the "Scraping Stack" into a high-stakes strategic asset. Modern pipelines now require:

Distributed Crawlers: To handle massive throughput.

Browser Automation: To mimic human behavior and bypass blocks.

Proxy Networks: To distribute requests and avoid IP throttling.

AI-Driven Parsing: Using small models to structure the very data used to train large ones.

Frameworks like Scrapy and Crawlee aren't just developer tools anymore; they are the logistics networks of the intelligence age.

The Real Winners

While the world watches NVIDIA’s stock price, the "Quiet Winners" are those building the extraction infrastructure:

Proxy Network Providers: The gatekeepers of web access.

Residential IP Networks: Essential for bypassing geo-blocks and bot detection.

Managed Scraping Platforms: Companies that turn "unstructured web" into "structured API."

These players rarely make the front page of the Wall Street Journal, but their role is becoming central as we hit the "Data Wall", the point where high-quality human-generated data becomes scarce and harder to find.

The Next Phase: The Fight for Freshness

The next phase of the AI economy won't just be about more data. It will be about real-time data.

Static datasets grow stale. To be useful, AI needs the web as it exists now, today’s news, tonight’s prices, this morning’s code commits. This demand will drive a new evolution in scraping technology: smarter, more resilient, and more autonomous.

The AI revolution is defined by the models we see. But it is powered by the infrastructure that knows how to collect the world.

Author

The Scraper

Engineer and Webscraping Specialist

About Author

The Scraper is a software engineer and web scraping specialist, focused on building production-grade data extraction systems. His work centers on large-scale crawling, anti-bot evasion, proxy infrastructure, and browser automation. He writes about real-world scraping failures, silent data corruption, and systems that operate at scale.