The Browser Is Being Rewritten for Scraping

The Scraper

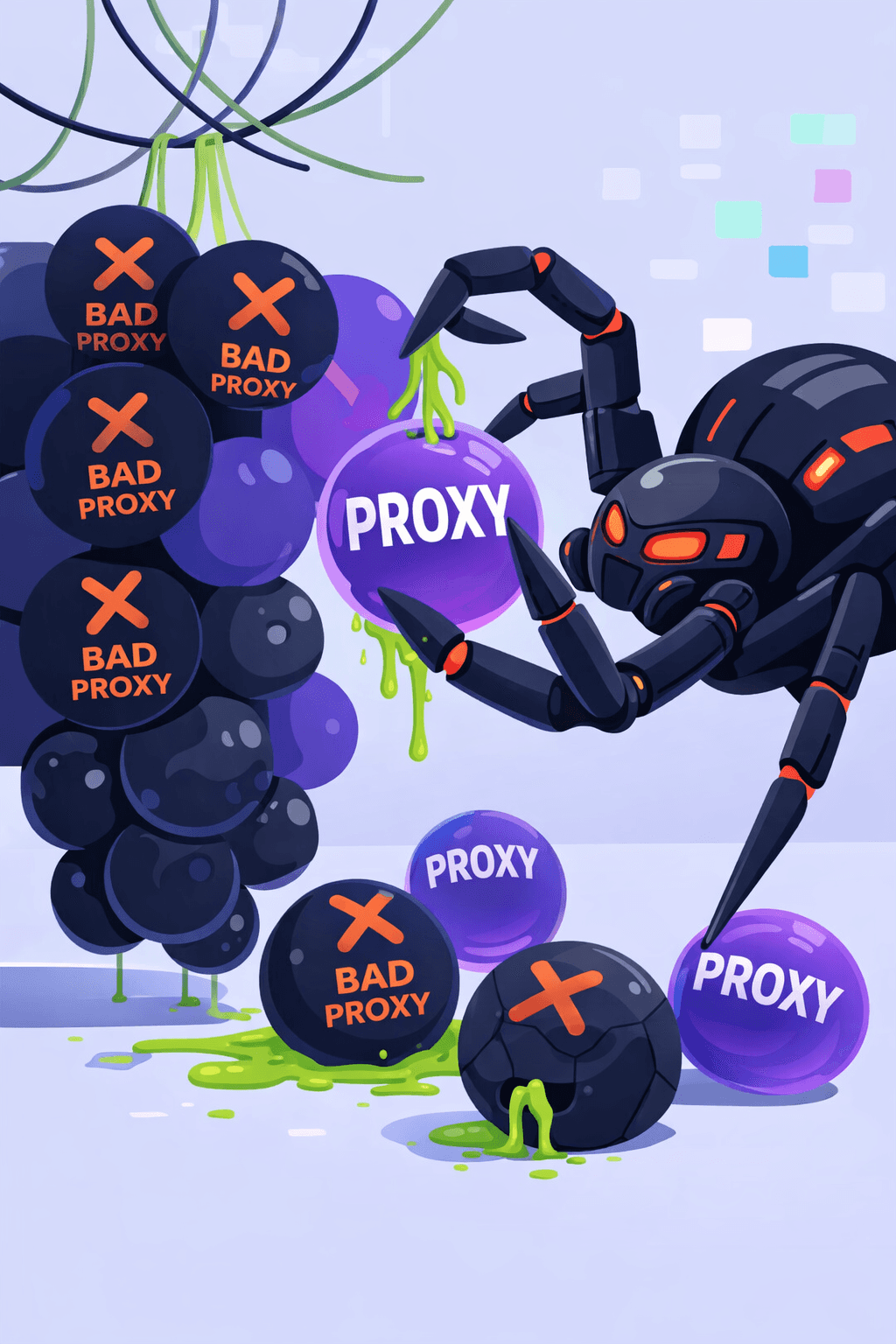

For years, the web scraping playbook was predictable:

Spin up Puppeteer or Playwright.

Run a headless instance of Chromium.

Inject a stealth plugin.

Rotate residential proxies.

Cross your fingers.

For a long time, it worked. But the cat-and-mouse game has reached an inflection point. Modern anti-bot solutions like Cloudflare, Akamai, and DataDome no longer just detect bots; they profile them. They analyze the entire stack, looking for the tiny "uncanny valley" inconsistencies between a real user's browser and an automated one.

We’ve pushed the "patched browser" model to its breaking point. Now, instead of trying to fix Chrome, we’re starting to rebuild it from the engine up.

The Old World: Automating a Black Box

The first generation of large-scale scraping was built on top of stock browsers. Tools like Puppeteer made it easy to control a browser programmatically, but they inherited a fundamental flaw: Chrome was never designed to be a bot.

Engineers spent years performing "surgical" patches to make Chromium look human:

Overriding navigator.webdriver flags.

Spoofing WebGL renderer strings.

Patching broken JavaScript execution contexts.

Injecting "stealth" scripts to mask automation leaks.

It was a game of Whac-A-Mole. Every time a new browser version dropped, a new leak appeared. Anti-bot systems evolved faster than the patches because they started looking deeper, at the TLS handshake, HTTP/2 frames, and TCP/IP fingerprints.
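
To make the "looking deeper" point concrete, here is a minimal sketch of how a TLS handshake can be reduced to a single fingerprint. It follows the publicly documented JA3 scheme, which is only one of several fingerprinting methods; nothing here says which scheme any particular vendor uses, and the ClientHello values in the usage note are made up for illustration.

```python
import hashlib
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class ClientHello:
    """The five ClientHello fields JA3 looks at, as decimal integers."""
    tls_version: int
    cipher_suites: Tuple[int, ...]
    extensions: Tuple[int, ...]
    elliptic_curves: Tuple[int, ...]
    ec_point_formats: Tuple[int, ...]


def ja3_string(hello: ClientHello) -> str:
    """Fields are joined with commas; values inside a field with dashes."""
    fields = [
        str(hello.tls_version),
        "-".join(map(str, hello.cipher_suites)),
        "-".join(map(str, hello.extensions)),
        "-".join(map(str, hello.elliptic_curves)),
        "-".join(map(str, hello.ec_point_formats)),
    ]
    return ",".join(fields)


def ja3_hash(hello: ClientHello) -> str:
    """The JA3 fingerprint is the MD5 hex digest of the JA3 string."""
    return hashlib.md5(ja3_string(hello).encode("ascii")).hexdigest()
```

For example, `ja3_string(ClientHello(771, (4865, 4866, 4867), (0, 23, 65281, 10, 11), (29, 23, 24), (0,)))` yields `771,4865-4866-4867,0-23-65281-10-11,29-23-24,0`. Reordering the cipher suites changes the hash, which is why a client that sends a Chrome User-Agent over a non-Chrome TLS stack stands out no matter how well its JavaScript environment is patched.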

The Breaking Point: Data Poisoning

Today, detection is holistic. Websites correlate signals across multiple layers:

Consistency: Does the User-Agent match the browser’s internal capabilities?

Signatures: Does the TLS fingerprint match a standard Chrome build?

Behavior: Is the mouse movement "too linear" or the session continuity broken?

Reputation: Is the IP linked to a known data center?

When these layers don’t align, you get flagged. But you might not get blocked. Instead, you face controlled data poisoning: the page loads, but the data is empty, prices are slightly altered, or the session silently degrades. This makes debugging a nightmare.
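
Because a poisoned page still loads, it has to be caught by validating the data itself rather than the response. A minimal sketch, assuming scraped records are dictionaries with hypothetical `name` and `price` fields and that you keep a short history of recently observed prices per item; the 20% threshold is an arbitrary placeholder, not a recommended value.

```python
from statistics import median


def poisoning_signals(record, price_history, max_deviation=0.20):
    """Return a list of reasons why a scraped record looks suspicious."""
    signals = []

    # Empty payloads: the page loaded, but the fields we need are blank.
    for field in ("name", "price"):
        if record.get(field) in (None, ""):
            signals.append(f"missing:{field}")

    # Altered values: compare against the median of recent observations.
    price = record.get("price")
    if isinstance(price, (int, float)) and price_history:
        baseline = median(price_history)
        if baseline > 0 and abs(price - baseline) / baseline > max_deviation:
            signals.append("price_deviation")

    return signals
```

A record that returns any signal is worth re-fetching from a different session before it reaches your dataset. A fixed threshold like this will not catch very small alterations; those generally require comparing against a trusted control session.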

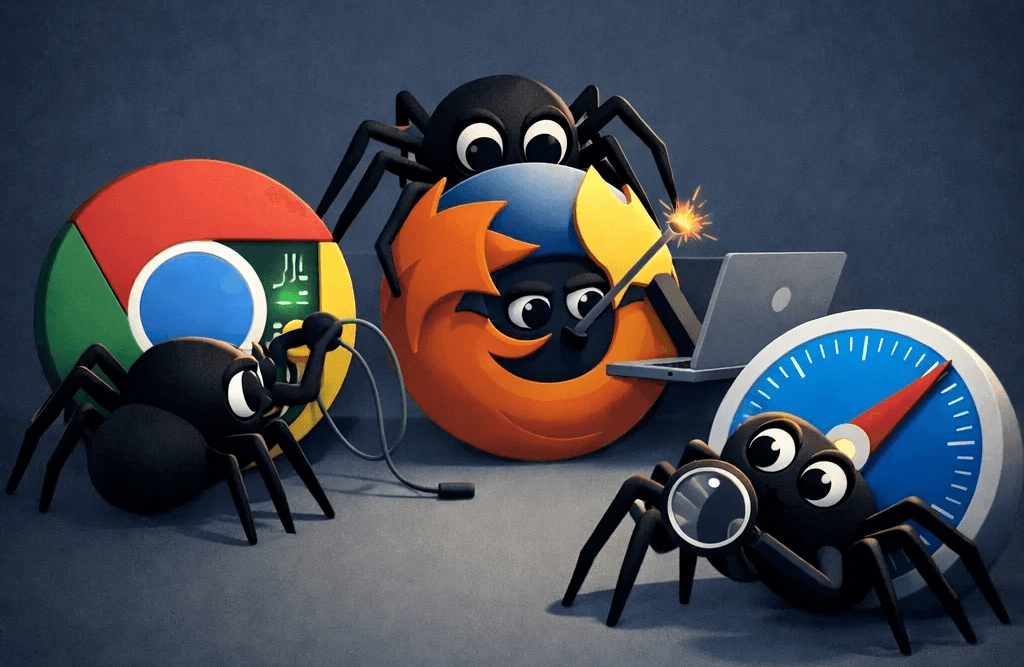

The New Wave: Browsers Built for Automation

We are seeing a fundamental shift with projects like Camoufox (Firefox-based) and Lightpanda. These aren't just wrappers; they are browsers redesigned for the scraping era.

1. Engine-Level Masking

Instead of layering "stealth" scripts on top of the browser (scripts that can themselves be detected by the anti-bot code they are trying to evade), these tools handle fingerprinting inside the engine itself, in native code rather than injected JavaScript. The browser doesn't pretend to be a real user; it is a real user as far as the JavaScript environment is concerned.

2. Stripped and Optimized

Standard Chrome carries massive overhead (extensions, sync services, telemetry). New-age automation browsers strip the bloat, optimizing for performance and memory efficiency at scale. This turns scraping from a "brute force" problem into an infrastructure-efficient process.

3. Native Automation

The question has shifted. We no longer ask: "How do we hide this bot?" We ask: "How do we build a system that generates mathematically consistent fingerprints by default?"
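
One way to read "consistent by default" is that fingerprint attributes are drawn together from a coherent profile instead of being randomized one by one. The sketch below illustrates that idea only; the profile values are hypothetical placeholders, and it is not a description of how Camoufox or Lightpanda generate fingerprints.

```python
import hashlib
import random

# Hypothetical, hand-written profiles. Each one is internally coherent:
# the platform string and screen size are plausible for the stated OS.
PROFILES = [
    {"os": "Windows", "platform": "Win32", "screen": (1920, 1080)},
    {"os": "macOS", "platform": "MacIntel", "screen": (1440, 900)},
    {"os": "Linux", "platform": "Linux x86_64", "screen": (1920, 1080)},
]


def fingerprint_for(session_id: str) -> dict:
    """Pick one whole profile per session, deterministically.

    Seeding from the session ID means the same session always presents
    the same fingerprint, and attributes are never mixed across profiles.
    """
    digest = hashlib.sha256(session_id.encode("utf-8")).digest()
    rng = random.Random(int.from_bytes(digest[:8], "big"))
    return dict(rng.choice(PROFILES))
```

The design choice is that inconsistency becomes impossible by construction: there is no code path that could pair a Windows platform string with a macOS screen size, so nothing needs to be patched afterwards.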

The Missing Link: The Network Identity

Even with a "perfect" browser, your stack remains vulnerable at the network layer. This is where most developers fail. You can have a perfect browser fingerprint, but if your network signature tells a different story, you’re toast.

Anti-bot systems evaluate Browser + Network + Behavior as a single identity. If your browser claims to be a Windows user in New York, but your IP:

Originates from a flagged data center ASN.

Has high "neighbor" noise (other bots on the same subnet).

Displays a TCP/IP stack fingerprint that does not match the claimed operating system.

...the browser's "perfect" fingerprint becomes irrelevant.
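
The single-identity evaluation described above can be approximated on your own side before a request ever leaves. A minimal sketch, assuming you already know the browser's claimed attributes and can obtain the proxy exit's attributes from your provider or an IP-intelligence source; every field name here is hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BrowserClaim:
    os: str        # OS the browser fingerprint claims, e.g. "Windows"
    timezone: str  # IANA timezone the browser reports


@dataclass(frozen=True)
class NetworkFacts:
    asn_type: str      # "residential", "mobile", or "datacenter"
    timezone: str      # IANA timezone of the IP's location
    tcp_os_guess: str  # OS inferred from the TCP/IP stack


def identity_mismatches(browser: BrowserClaim, network: NetworkFacts) -> list:
    """List the ways the network layer contradicts the browser's story."""
    issues = []
    if network.asn_type == "datacenter":
        issues.append("datacenter_asn")
    if browser.timezone != network.timezone:
        issues.append("timezone_mismatch")
    if browser.os.lower() != network.tcp_os_guess.lower():
        issues.append("tcp_stack_mismatch")
    return issues
```

A Windows browser in `America/New_York` routed through a data center exit in `Europe/Berlin` with a Linux TCP stack would return all three issues; an empty list means the two layers at least tell the same story.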

Where Evomi Fits In: Strategic Alignment

High-quality proxy infrastructure is no longer a luxury; it’s a multiplier. Using a provider like Evomi transforms your proxies from disposable tools into a core part of your bot's identity.

By pairing a purpose-built browser (like Camoufox) with Evomi’s high-integrity network, you achieve stack alignment:

Geographic Consistency: Match your browser’s locale and timezone to the IP's location.

Clean Reputation: Use residential IPs with low fraud scores to avoid immediate scrutiny.

Stable Sessions: Maintain session continuity without IP jumps that trigger security challenges.
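
Geographic consistency is easiest to get right when the browser settings are derived from the proxy exit rather than configured separately. A minimal sketch, assuming a hypothetical `ProxyExit` record and a small hand-maintained country-to-locale table. The returned keys mirror the `locale`, `timezone_id`, and `proxy` keyword arguments of Playwright's `browser.new_context()`; the proxy host and credentials in the usage note are placeholders, not real Evomi endpoints.

```python
from dataclasses import dataclass

# Hypothetical mapping; extend it for the countries you actually use.
COUNTRY_LOCALES = {"US": "en-US", "DE": "de-DE", "FR": "fr-FR"}


@dataclass(frozen=True)
class ProxyExit:
    server: str    # e.g. "http://proxy.example.com:8000" (placeholder)
    username: str
    password: str
    country: str   # ISO 3166-1 alpha-2 code of the exit IP
    timezone: str  # IANA timezone of the exit IP


def context_options(exit_node: ProxyExit) -> dict:
    """Derive browser context settings from the proxy exit's location."""
    return {
        # Raises KeyError for unmapped countries instead of guessing a locale.
        "locale": COUNTRY_LOCALES[exit_node.country],
        "timezone_id": exit_node.timezone,
        "proxy": {
            "server": exit_node.server,
            "username": exit_node.username,
            "password": exit_node.password,
        },
    }
```

With Playwright this could be used as `browser.new_context(**context_options(exit_node))`. Reusing the same `ProxyExit` for the lifetime of a session also keeps the IP stable, so locale, timezone, and address never drift apart mid-session.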

The New Scraping Stack

The industry is moving away from simple scripts and toward coordinated systems. The new stack looks like this:

A Purpose-Built Browser Engine: For consistent, engine-level fingerprinting.

High-Quality Proxy Network (Evomi): For a clean, matching network identity.

Session-Aware Logic: To manage cookies and state intelligently.

Observability: To monitor for silent data poisoning.

Final Thought

We are moving from an era of hiding to an era of architecting. Scraping is becoming a systems engineering problem rather than a scripting problem.

The teams that will succeed aren't the ones writing the cleverest "stealth" patches; they are the ones building aligned, end-to-end systems that provide the transparency and reliability modern data collection demands.

Author

The Scraper

Engineer and Web Scraping Specialist

About Author

The Scraper is a software engineer and web scraping specialist, focused on building production-grade data extraction systems. His work centers on large-scale crawling, anti-bot evasion, proxy infrastructure, and browser automation. He writes about real-world scraping failures, silent data corruption, and systems that operate at scale.